This is why, as it currently stands, you cannot create a unique index on timeseries data. In conclusion, a timeseries collection is a special collection type that is basically a view into a special underlying collection, so it behaves differently from a normal MongoDB collection. This is done to allow MongoDB-managed storage of timeseries documents, which would otherwise be quite expensive to do with regular MongoDB documents. However, this capability also comes with some caveats, namely the unique index limitation that you came across. Having said that, if you feel that a secondary unique index is a must, you can create the collection in the normal manner, but you lose the compactness of timeseries collection storage. I suggest benchmarking your workload and checking whether you can manage with a normal collection to store your data, if the features you lose by using timeseries are important to your use case.

Deduplication: identical files must be stored as one copy (file-level.

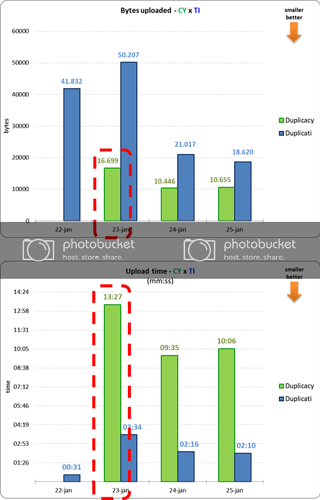

Data duplicacy full

Duplicacy is the only cloud-backup tool that offers all the following 7 essential features: Incremental backup: only back up what has been changed. Full snapshot: although each backup is incremental, it must behave like a full snapshot for easy restore and deletion.

Inside the actual underlying collection, the three documents are stored in a single “bucket”, so the test collection is just a view onto the actual underlying collection. Using insertMany, I inserted a couple of hundred documents. We shouldn't be dealing with generating the _id field for this purpose… It should not allow an insert if the metadata and the timestamp are the same.
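Since a timeseries collection will not reject such inserts itself, one possible workaround is to drop duplicates on the client before calling insertMany. The following is only a minimal sketch: the helper name `dedupe_batch` and the field names `metadata` and `timestamp` are assumptions for illustration, so substitute whatever metaField and timeField your collection was created with.

```python
import json
from datetime import datetime, timezone


def dedupe_batch(docs, seen=None, meta_field="metadata", time_field="timestamp"):
    """Return only the docs whose (metadata, timestamp) pair is new.

    `seen` is an optional set that can be shared across batches so that
    duplicates are also caught between calls. Field names are assumed.
    """
    seen = set() if seen is None else seen
    unique = []
    for doc in docs:
        # Serialize metadata with sorted keys so that dicts with the same
        # content but different key order map to the same key.
        meta_key = json.dumps(doc[meta_field], sort_keys=True, default=str)
        key = (meta_key, doc[time_field])
        if key in seen:
            continue  # same metadata and timestamp already kept
        seen.add(key)
        unique.append(doc)
    return unique


if __name__ == "__main__":
    t = datetime(2022, 1, 1, tzinfo=timezone.utc)
    batch = [
        {"metadata": {"sensor": "a"}, "timestamp": t, "value": 1},
        {"metadata": {"sensor": "a"}, "timestamp": t, "value": 2},
        {"metadata": {"sensor": "b"}, "timestamp": t, "value": 3},
    ]
    print(len(dedupe_batch(batch)))
```

With pymongo, the filtered list could then be passed to `collection.insert_many(...)`. Note that this only catches duplicates seen by the current process; it does not check what is already stored in the collection.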

I think this is really important for a timeseries database. I’ve been testing it in a Jupyter notebook using pymongo Version: 4.0. At first, I let MongoDB handle the _id field, thinking it could handle duplicate data with the same metadata and timestamp.
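If generating the _id yourself turns out to be acceptable after all, one option is to derive it from the metadata and timestamp so that repeated readings collide. This is a rough sketch and not something the thread confirms: the helper `deterministic_id` is hypothetical, and it assumes a regular (non-timeseries) collection, where `_id` is unique.

```python
import hashlib
import json
from datetime import datetime, timezone


def deterministic_id(metadata, timestamp):
    """Build a repeatable _id from a reading's metadata and timestamp.

    Hypothetical helper: the same (metadata, timestamp) pair always
    produces the same hex string.
    """
    payload = json.dumps(
        {"meta": metadata, "ts": timestamp.isoformat()},
        sort_keys=True,  # key order in metadata must not change the hash
        default=str,     # fall back to str() for non-JSON values
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    ts = datetime(2022, 1, 1, 12, 0, tzinfo=timezone.utc)
    doc = {"metadata": {"sensor": "a"}, "timestamp": ts, "value": 21.5}
    doc["_id"] = deterministic_id(doc["metadata"], doc["timestamp"])
    print(doc["_id"])
```

In a regular collection, inserting a second document with the same `_id` through pymongo's `insert_one` raises `pymongo.errors.DuplicateKeyError`, which gives the "do not allow the insert" behaviour at the cost of timeseries storage compactness.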